AI infrastructure boom: Oracle & CoreWeave lead

Oracle, AMD, and CoreWeave lead an AI infrastructure surge of up to $700B in 2026. High power demand and grid limits now shape the pace and location of GPU deployments.

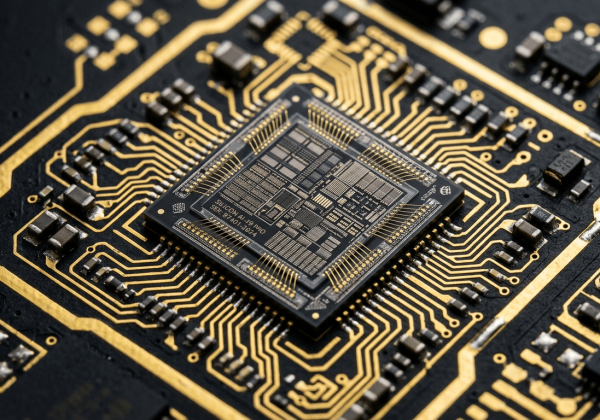

Investor interest in artificial intelligence infrastructure remains strong, driven by a wave of major cloud and data-center announcements. Even with interest rates elevated, capital is flowing rapidly into AI compute. Recent multi-billion-dollar deals highlight both surging demand for GPU capacity and the operational challenges of scaling physical infrastructure.

Oracle and surging cloud infrastructure demand

Oracle Corporation has reported a record remaining performance obligations (RPO) backlog of $553 billion, reflecting strong long-term commitments for AI training and inference workloads on its Oracle Cloud Infrastructure (OCI). The company has secured multi-billion-dollar contracts with major AI players including OpenAI, Meta, NVIDIA and xAI. Oracle is expanding its Gen2 Cloud offering and plans to invest up to $50 billion in AI infrastructure in fiscal 2026, including deployments of thousands of AMD and NVIDIA accelerators. Demand for GPU-based capacity remains robust, supported by customer-funded build-outs.

Specialized providers and debt financing

CoreWeave has closed an $8.5 billion delayed-draw term loan facility (initial draw capacity of approximately $7.5 billion, expandable to $8.5 billion upon asset stabilization). The financing, anchored by Blackstone Credit & Insurance with participation from global institutions, will support further expansion of its GPU fleet and data-center footprint to meet AI customer contracts. This transaction underscores the growing role of specialized AI cloud providers alongside traditional hyperscalers.

AMD's positioning in the AI accelerator market

Advanced Micro Devices (AMD) continues to gain traction in the AI silicon market with its Instinct accelerator family. The MI300X remains in production deployments, while the MI350 series (including MI350X and MI355X) is ramping in 2026 with improved performance and memory capacity. Key wins include Oracle superclusters, Microsoft Azure production workloads, Meta, OpenAI (up to 6 GW of next-generation capacity starting with MI450 in H2 2026) and multiple neocloud providers. AMD's ROCm software stack is maturing, enabling broader adoption as cloud operators seek multi-vendor supply chains.

Record capital expenditure by hyperscalers

The leading hyperscalers (Amazon, Google, Meta, Microsoft and Oracle) are projected to spend between $630 billion and $700 billion in capital expenditures in 2026 - an increase of approximately 60% year-over-year. Roughly 75% of this spending is directed toward AI infrastructure, including GPUs, networking and data-center construction. This represents the largest single-year investment cycle in cloud computing history and supports the shift to specialized GPU-as-a-Service models for large language model developers.
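To put these projections in perspective, the short Python sketch below works through the arithmetic. It takes the $630-700 billion range, the roughly 60% growth rate and the roughly 75% AI share quoted above as given. The implied prior-year baseline is derived here and is not a figure reported by the companies, and the function name and parameters are illustrative only.

```python
def implied_capex_breakdown(capex_2026_bn, yoy_growth=0.60, ai_share=0.75):
    """Derive rough figures from a projected 2026 capex total (in $ billions).

    yoy_growth and ai_share are the approximate values quoted in the article;
    the prior-year baseline is an inference, not a reported number.
    """
    # If 2026 spending is (1 + growth) times the prior year, invert to get the baseline.
    prior_year_bn = capex_2026_bn / (1 + yoy_growth)
    # Portion of 2026 spending directed toward AI infrastructure.
    ai_bn = capex_2026_bn * ai_share
    return {"prior_year_bn": prior_year_bn, "ai_bn": ai_bn}


low = implied_capex_breakdown(630)
high = implied_capex_breakdown(700)

print(f"Implied prior-year capex: ${low['prior_year_bn']:.1f}B to ${high['prior_year_bn']:.1f}B")
print(f"AI-directed 2026 capex:   ${low['ai_bn']:.1f}B to ${high['ai_bn']:.1f}B")
```

Under these assumptions, the implied 2025 baseline is roughly $394 billion to $438 billion, and the AI-directed portion of 2026 spending is about $472.5 billion to $525 billion.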

Energy and power-grid realities

AI-specific data centers require significantly higher power density than traditional cloud facilities. Global data-center electricity consumption is forecast to approach 1,050 TWh in 2026. In the United States, data centers could consume between 6.7% and 12% of total electricity by 2028. Power-grid access, transformers and cooling infrastructure are now among the primary constraints on new deployments, with analysts noting potential shortages emerging in 2027-2028 in key markets. These physical limits are shaping the pace and geography of AI infrastructure rollout.
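Annual energy figures in TWh can be hard to relate to the gigawatt-scale capacity numbers used for individual deployments. The Python sketch below converts between the two. It assumes a non-leap year of 8,760 hours, and the `utilization` parameter is a hypothetical simplification: real facilities do not draw their full rated power continuously.

```python
HOURS_PER_YEAR = 8760  # assumption: non-leap year


def average_power_gw(annual_twh):
    """Average continuous power (GW) implied by an annual energy total (TWh)."""
    return annual_twh * 1000 / HOURS_PER_YEAR  # 1 TWh = 1000 GWh


def annual_energy_twh(power_gw, utilization=1.0):
    """Annual energy (TWh) for a given power draw (GW) at a given utilization."""
    return power_gw * HOURS_PER_YEAR * utilization / 1000


# Global forecast quoted in the article: about 1,050 TWh in 2026.
global_avg_gw = average_power_gw(1050)

# Idealized upper bound for a 6 GW deployment running flat out all year.
six_gw_twh = annual_energy_twh(6)

print(f"1,050 TWh/year is about {global_avg_gw:.0f} GW of average draw")
print(f"6 GW at full utilization is about {six_gw_twh:.1f} TWh/year")
```

On these assumptions, 1,050 TWh per year corresponds to an average draw of about 120 GW, and a 6 GW build-out running at full power all year would use roughly 52.6 TWh.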

Key takeaways

- Oracle Corporation reported a record remaining performance obligations (RPO) backlog of $553 billion, driven by long-term AI cloud contracts with OpenAI, Meta, NVIDIA, xAI and others. The company plans up to $50 billion in AI infrastructure capital expenditure in fiscal 2026.

- CoreWeave closed an $8.5 billion delayed-draw term loan facility (initial capacity ~$7.5 billion) in March 2026, anchored by Blackstone Credit & Insurance, to expand its AI GPU fleet and data centers.

- AMD's Instinct MI300X accelerators are in production at Oracle, Microsoft Azure, Meta and other providers; the MI350 series is ramping in 2026, with OpenAI committing to up to 6 GW of next-generation AMD capacity (MI450 rollout starting H2 2026).

- Hyperscalers (Amazon, Google, Meta, Microsoft, Oracle) are projected to spend $630-700 billion in capital expenditures in 2026, with ~75% allocated to AI infrastructure - a ~60% year-over-year increase.

- Cloud providers are expanding specialized GPU-as-a-Service offerings to meet demand from large language model developers. Global data-center electricity consumption is forecast to approach 1,050 TWh in 2026, and grid access, transformers and cooling are now among the primary constraints on new deployments.